Case study

Rearchitecting for the Agent-Native Era: S2A Design System at Adobe

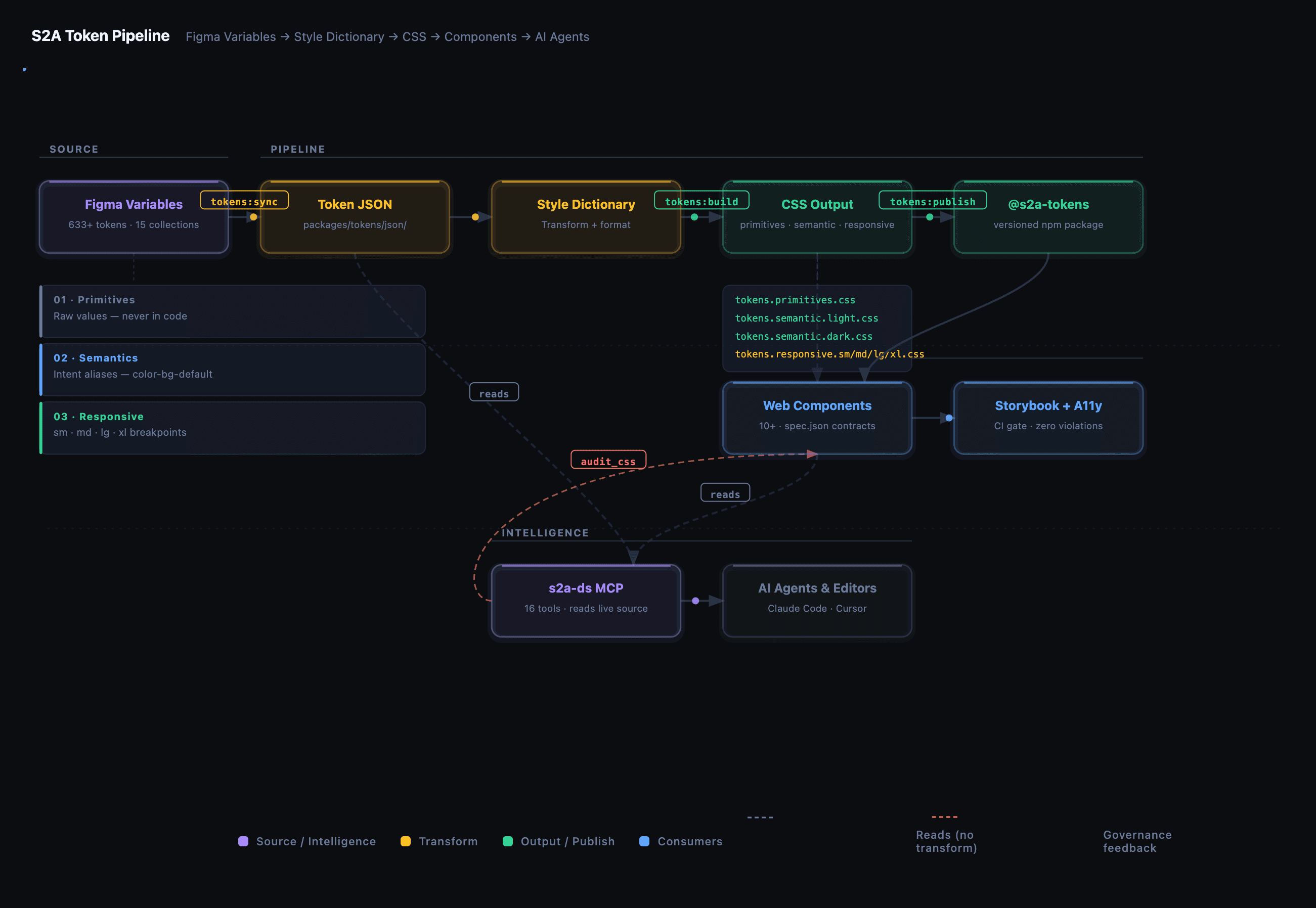

I led the design and build of S2A, Adobe's agent-native design system, from a blank page to a working token pipeline, component library, MCP server, and automated governance layer. The core argument I made and proved: a design system that expresses intent clearly enough for humans is a design system that AI agents can generate from reliably.

Executive Summary

The most important thing I have learned doing design systems work is this: AI output quality is a direct function of system quality. When tokens are semantic and complete, when components have machine-readable specs, and when governance is automated, agents produce work that is ready for review. When the system is fragmented, agents produce work that has to be rebuilt.

That insight drove every architectural decision in S2A. This was not a component library with an AI feature bolted on. It was a system designed from the start to serve both human designers and AI agents with equal fidelity through the same token layer, the same machine-readable contracts, and the same MCP interface.

The result: a working token pipeline syncing 499 tokens from Figma to CSS, 10+ production-ready web components with machine-readable specs, an MCP server giving designers and engineers 17 tools to query the live system directly, and an automated governance layer that catches token violations before they reach production.

Component documentation that previously took a full day to produce is now generated in a single session. Engineers no longer hunt for the right token. The agent knows. This is not a prototype. It is a working system, shipping against a live product redesign.

The Problem I Was Hired to Solve

Adobe.com had been built by separate teams working in isolation. Different surfaces had different interpretations of brand tokens, spacing, and type scales. There was no shared component library that design and engineering both trusted. When the homepage redesign began, those gaps became blockers.

The deeper problem was structural. Even when individual teams built good components, those components stayed local to the team that built them. There was no process for formalizing a component into the shared system, no way for agents or engineers to query what existed, and no documentation standard that stayed in sync with source.

The pattern was: decisions made in Figma, rediscovered in code, rediscovered again in QA. Every handoff was a lossy translation. What was needed was not a better component library. It was a rearchitecting of what the system is: from a static artifact that humans maintain to a live platform that humans and agents can both read from and generate against.

My Role

I led the full-stack architecture of S2A, from making the strategic case internally to defining the token layer to shipping the MCP server. This meant driving alignment across design, engineering, and product leadership on what the system needed to be, then building the technical foundation that made that vision executable.

Responsibilities

- Strategic framing and internal advocacy: defining the architecture principle that atoms belong in S2A regardless of where they currently appear.

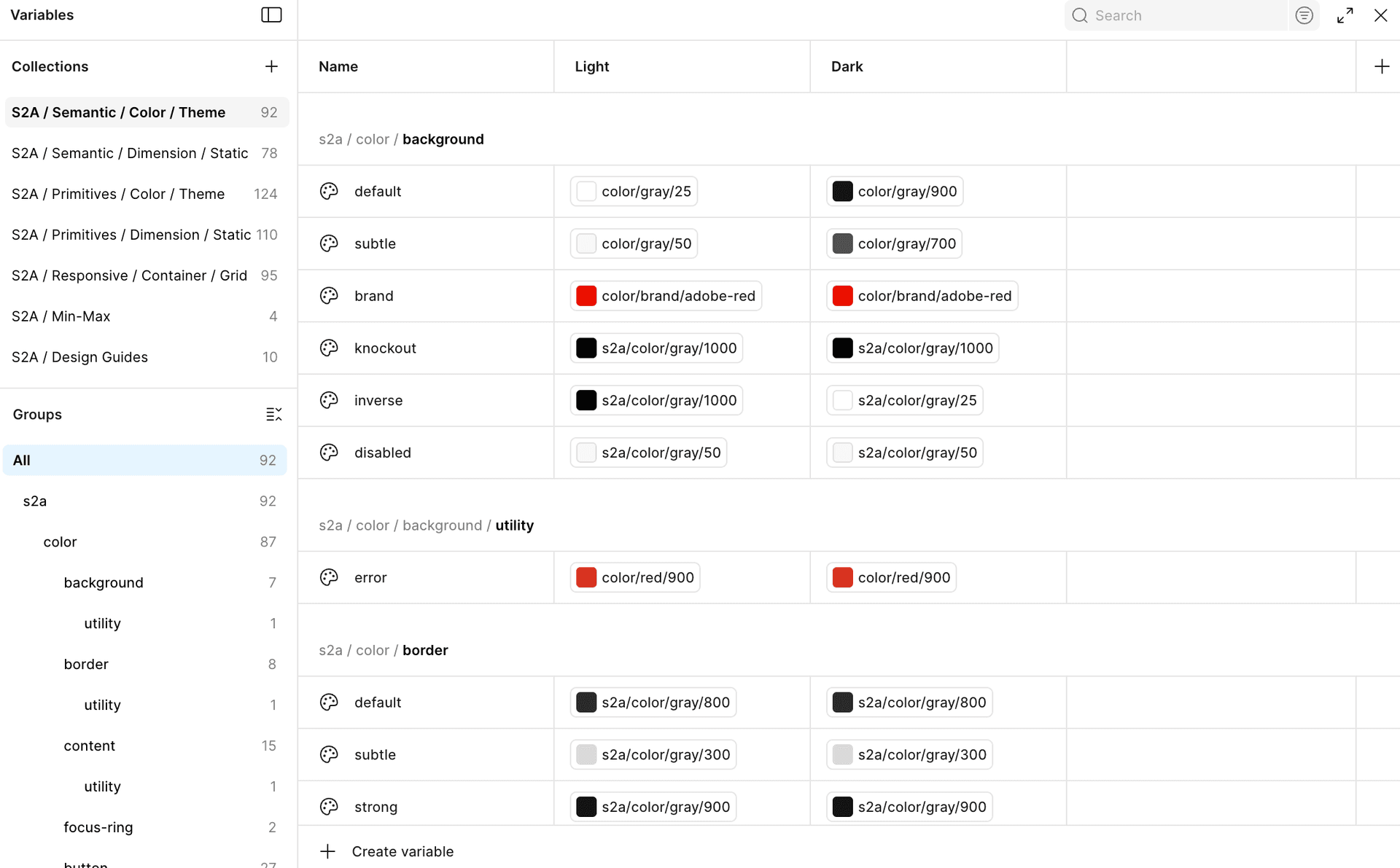

- Token architecture: designing the semantic layer, naming conventions, and multi-mode structure across 7 collections.

- Token pipeline: owning the full Figma Variables to Style Dictionary to CSS output pipeline.

- Component library: implementing framework-agnostic web components with machine-readable spec contracts.

- MCP server and governance: building the query layer and automated audit systems that enforce token standards at scale.

- Documentation and alignment: establishing a 6-sheet in-Figma documentation framework and driving agreement across design, engineering, and product on system contracts.

The Work

Phase 1: Making the Strategic Case

Before writing any code, I mapped the problem and built alignment. The central argument was architectural: if a component can stand on its own and be reused across more than one context, it belongs in the shared system. Components embedded in one-off block implementations are invisible to the system, invisible to other engineers, invisible to documentation, and invisible to agents.

Phase 2: Token Foundation

I designed a three-layer token architecture: primitives for raw values, semantics for intent-mapped aliases such as color-background-default and color-content-title, and responsive mode aliases for breakpoint-specific resolution. The system now has 499 tokens across 7 collections, all synced from Figma Variables through a Style Dictionary build pipeline into versioned CSS output.

The semantic layer is what makes the system agent-readable. When a token says color-content-default, an agent understands the intent, not just the value. And because components bind to semantic tokens rather than raw values, a theme change propagates without touching a single component.
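
To make the layering concrete, here is a minimal TypeScript sketch of alias resolution across a primitive layer and a per-mode semantic layer. It is an illustration, not the S2A source: the primitive names, hex values, and the resolveToken helper are assumptions, and only the two semantic token names come from the text above.

```typescript
// Hypothetical token data: primitive names and hex values are placeholders.
// Only the semantic names come from the case study.
type TokenValue = string | { alias: string };
type TokenLayer = Record<string, TokenValue>;

const primitives: TokenLayer = {
  "gray-50": "#fafafa",
  "gray-900": "#1a1a1a",
};

// One semantic layer per mode; each entry points at a primitive by name.
const lightMode: TokenLayer = {
  "color-content-default": { alias: "gray-900" },
  "color-background-default": { alias: "gray-50" },
};
const darkMode: TokenLayer = {
  "color-content-default": { alias: "gray-50" },
  "color-background-default": { alias: "gray-900" },
};

// Follow aliases through the given layers until a raw value is reached.
function resolveToken(name: string, layers: TokenLayer[], seen: string[] = []): string {
  if (seen.indexOf(name) !== -1) {
    throw new Error(`Alias cycle: ${seen.concat(name).join(" -> ")}`);
  }
  for (const layer of layers) {
    const value = layer[name];
    if (value === undefined) continue; // not defined in this layer, try the next
    return typeof value === "string"
      ? value
      : resolveToken(value.alias, layers, seen.concat(name));
  }
  throw new Error(`Unknown token: ${name}`);
}

// A component asks for intent; the active mode decides the value.
const inLight = resolveToken("color-content-default", [lightMode, primitives]); // "#1a1a1a"
const inDark = resolveToken("color-content-default", [darkMode, primitives]); // "#fafafa"
```

The component-side request is identical in both calls. Only the semantic layer passed in changes, which is the property that lets a theme switch avoid component edits.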

Phase 3: Token Pipeline

Tokens being right in Figma does not help engineers if they cannot get them reliably into code. I built the sync pipeline from Figma Variables API to structured JSON to Style Dictionary transforms to versioned CSS output published as @adobecom/s2a-tokens. Figma is the single source of truth, and a change in Figma propagates to CSS without a manual handoff step.
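
The last step of that pipeline, structured JSON to CSS, can be sketched as follows. This is a hand-rolled stand-in written for illustration, not Style Dictionary's API and not the published package's build. The s2a prefix, the {group.name} reference syntax, and the sample tokens are all assumptions.

```typescript
// Hypothetical nested token JSON: leaves are raw values or "{path.to.token}" references.
type TokenTree = { [key: string]: TokenTree | string };

function toVarName(path: string[], prefix: string): string {
  return `--${prefix}-${path.join("-")}`;
}

// Turn a "{gray.900}" reference into var(--s2a-gray-900); pass raw values through.
function toCssValue(value: string, prefix: string): string {
  const ref = /^\{([^}]+)\}$/.exec(value);
  const target = ref ? ref[1] : undefined;
  return target ? `var(${toVarName(target.split("."), prefix)})` : value;
}

// Walk the tree depth-first and emit one custom property per leaf.
function collectDeclarations(tree: TokenTree, prefix: string, path: string[] = []): string[] {
  let lines: string[] = [];
  for (const key of Object.keys(tree)) {
    const value = tree[key];
    if (value === undefined) continue;
    const next = path.concat(key);
    if (typeof value === "string") {
      lines.push(`  ${toVarName(next, prefix)}: ${toCssValue(value, prefix)};`);
    } else {
      lines = lines.concat(collectDeclarations(value, prefix, next));
    }
  }
  return lines;
}

function buildCss(tree: TokenTree, prefix: string = "s2a"): string {
  return `:root {\n${collectDeclarations(tree, prefix).join("\n")}\n}`;
}

const sampleTokens: TokenTree = {
  gray: { "900": "#1a1a1a" },
  color: { content: { default: "{gray.900}" } },
};
const sampleCss = buildCss(sampleTokens);
```

For the sample input, sampleCss is a :root block containing `--s2a-gray-900: #1a1a1a;` and `--s2a-color-content-default: var(--s2a-gray-900);`. Emitting references as var() keeps the alias relationship visible in the CSS output instead of flattening it to a raw value.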

Phase 4: Component Library with Machine-Readable Contracts

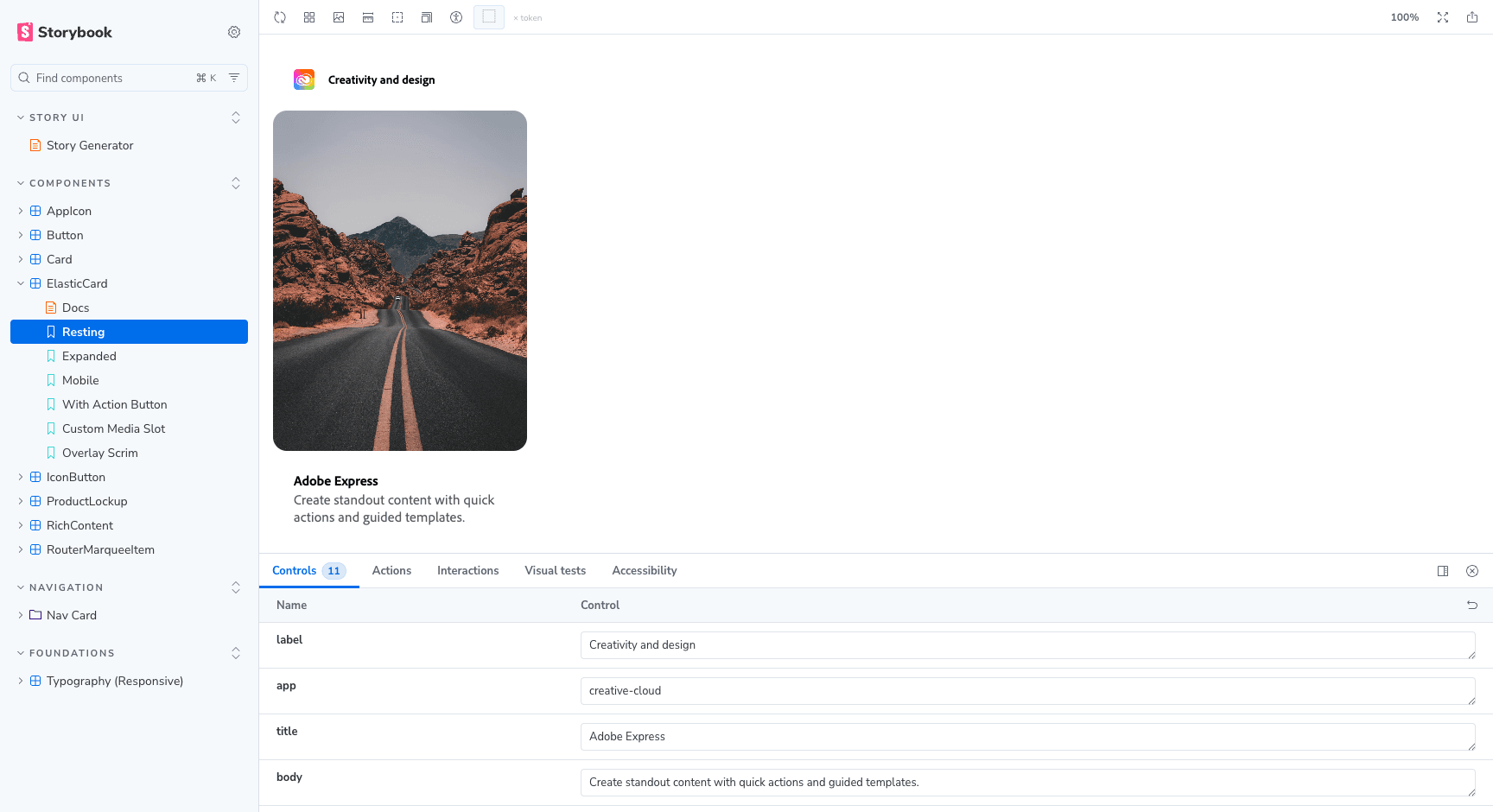

Once the token foundation was stable, I rebuilt components against it. Each component is a web component in plain JavaScript and CSS with no framework dependency. The current library includes Button, IconButton, AppIcon, Card, RouterCard, Media, ProductLockup, ProgressBar, RichContent, and RouterMarqueeItem.

Every component ships with a {slug}.spec.json: a machine-readable contract covering variants, prop types, token bindings, accessibility requirements, and Figma node IDs. These specs are not documentation. They are source. They are what make the MCP server reliable, and what would make a system like Primer queryable by Copilot without a human in the loop.
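
As an illustration of what such a contract might look like, the sketch below types a spec and checks an unknown JSON value against it. The case study names the categories a spec covers, not the schema, so every field name here, the sample values, and the validateSpec helper are assumptions.

```typescript
// Hypothetical field names; the real {slug}.spec.json schema is not shown in this case study.
interface ComponentSpec {
  name: string;
  variants: string[];
  props: Record<string, string>; // prop name -> type
  tokens: Record<string, string>; // CSS property -> semantic token name
  a11y: { role: string; keyboard: string[] };
  figmaNodeId: string;
}

function isRecord(value: unknown): value is Record<string, unknown> {
  return typeof value === "object" && value !== null && !Array.isArray(value);
}

// Return a list of problems; an empty list means the spec is structurally usable.
function validateSpec(input: unknown): string[] {
  if (!isRecord(input)) return ["spec: expected a JSON object"];
  const problems: string[] = [];
  for (const key of ["name", "figmaNodeId"]) {
    if (typeof input[key] !== "string") problems.push(`${key}: expected string`);
  }
  const variants = input["variants"];
  if (!Array.isArray(variants) || variants.length === 0) {
    problems.push("variants: expected a non-empty array");
  }
  for (const key of ["props", "tokens", "a11y"]) {
    if (!isRecord(input[key])) problems.push(`${key}: expected object`);
  }
  const tokens = input["tokens"];
  if (isRecord(tokens)) {
    for (const property of Object.keys(tokens)) {
      const token = tokens[property];
      // A binding must name a token; a raw color defeats the purpose of the contract.
      if (typeof token !== "string" || /^(#|rgba?\()/.test(token)) {
        problems.push(`tokens.${property}: expected a token name, not a raw value`);
      }
    }
  }
  return problems;
}

// Placeholder values throughout, including the Figma node ID.
const buttonSpec: ComponentSpec = {
  name: "Button",
  variants: ["primary", "secondary"],
  props: { label: "string", disabled: "boolean" },
  tokens: { color: "color-content-default", "background-color": "color-background-default" },
  a11y: { role: "button", keyboard: ["Enter", "Space"] },
  figmaNodeId: "0:0",
};
```

Calling validateSpec(buttonSpec) returns an empty array, while a spec whose tokens map contains a value such as "#fff" returns one problem for that property. Treating the spec as checked input is what lets downstream tooling rely on it as source.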

Phase 5: Accessibility and Inclusive Design

Every component has a Storybook story that serves as a living, interactive reference for engineers. Accessibility is built into the component architecture: semantic HTML, correct ARIA roles, keyboard navigability, and token-bound color pairings that meet WCAG 2.2 AA contrast.

The component spec contracts include accessibility requirements as structured data: role, keyboard interactions, focus behavior, and contrast requirements. Inclusive design review is also built into the workflow through Designpowers at the design phase and Gotrino at the code-review layer, extending accessibility from structure into cognitive, cultural, and linguistic dimensions.
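
A contrast requirement expressed as data can be checked mechanically. The sketch below applies the WCAG relative luminance and contrast ratio formulas to a structured requirement. The ContrastRequirement shape and the sample colors are assumptions for illustration, and the 4.5:1 threshold is the WCAG AA minimum for normal-size text.

```typescript
// Hypothetical shape for a contrast requirement carried in a component spec.
interface ContrastRequirement {
  foreground: string; // resolved #rrggbb value
  background: string; // resolved #rrggbb value
  minRatio: number; // 4.5 for normal text at WCAG AA
}

// WCAG relative luminance of an sRGB color given as #rrggbb.
function relativeLuminance(hex: string): number {
  if (!/^#[0-9a-fA-F]{6}$/.test(hex)) throw new Error(`Expected #rrggbb, got ${hex}`);
  const rgb = parseInt(hex.slice(1), 16);
  const linear = (channel: number): number => {
    const s = channel / 255;
    return s <= 0.03928 ? s / 12.92 : Math.pow((s + 0.055) / 1.055, 2.4);
  };
  const r = linear((rgb >> 16) & 0xff);
  const g = linear((rgb >> 8) & 0xff);
  const b = linear(rgb & 0xff);
  return 0.2126 * r + 0.7152 * g + 0.0722 * b;
}

// Contrast ratio is (lighter + 0.05) / (darker + 0.05), ranging from 1 to 21.
function contrastRatio(a: string, b: string): number {
  const la = relativeLuminance(a);
  const lb = relativeLuminance(b);
  return (Math.max(la, lb) + 0.05) / (Math.min(la, lb) + 0.05);
}

function meetsRequirement(req: ContrastRequirement): boolean {
  return contrastRatio(req.foreground, req.background) >= req.minRatio;
}

const bodyText: ContrastRequirement = {
  foreground: "#1a1a1a", // placeholder value
  background: "#ffffff",
  minRatio: 4.5,
};
const bodyTextPasses = meetsRequirement(bodyText);
```

Because the check runs on resolved token values, it can be repeated for every mode a token pair supports.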

Phase 6: MCP Server

I built and deployed a local MCP server, s2a-ds, that gives developers and AI agents 17 tools to query the live design system directly from their editors. The tools resolve tokens by name or CSS property, inspect component schemas, validate CSS against the token system, and generate tech specs from component source.

The server reads from actual component source files and token JSON, not a separate documentation layer. When a component changes, the server reflects the change on the next query. There is no stale documentation problem because there is no separate documentation layer to go stale.
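
The read-at-query-time idea can be sketched without any MCP plumbing. In the example below, each tool calls a loader every time it runs instead of holding a cached copy. The tool names, the TokenRecord shape, and the in-memory loader are assumptions, and a real server would register handlers like these through an MCP SDK and read from disk.

```typescript
// Hypothetical record shape for one token as exposed to a query tool.
interface TokenRecord {
  name: string;
  value: string;
  cssProperties: string[]; // properties this token is intended for
}

// Tools take a loader, not a snapshot, so every query sees the current source.
function createTokenTools(loadTokens: () => TokenRecord[]) {
  return {
    // Look up a single token by its name.
    resolve_token(name: string): TokenRecord | undefined {
      return loadTokens().filter((token) => token.name === name)[0];
    },
    // List the tokens that apply to a given CSS property.
    tokens_for_property(property: string): TokenRecord[] {
      return loadTokens().filter((token) => token.cssProperties.indexOf(property) !== -1);
    },
  };
}

// Stand-in for token JSON on disk; values are placeholders.
let tokenSource: TokenRecord[] = [
  { name: "color-content-default", value: "#1a1a1a", cssProperties: ["color"] },
  { name: "color-background-default", value: "#fafafa", cssProperties: ["background-color"] },
];

const tokenTools = createTokenTools(() => tokenSource);
const before = tokenTools.resolve_token("color-content-default");

// Simulate an upstream change; no rebuild or cache invalidation step is needed.
tokenSource = [{ name: "color-content-default", value: "#000000", cssProperties: ["color"] }];
const after = tokenTools.resolve_token("color-content-default");
```

Here before.value is "#1a1a1a" and after.value is "#000000" with no step in between other than the source changing. The trade-off is a read per query, which is usually acceptable for a local server.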

Phase 7: Automated Governance

I built automated governance into the MCP layer. The audit_css tool scans any CSS file and flags token violations, including hardcoded hex and rgba values, primitive tokens used directly where they should alias through semantic tokens, and hardcoded dimensions that exactly match a semantic token. It then returns a severity-graded report with a remediation for each violation.

This runs on demand from any editor session. Engineers get the report before code review. Designers can audit a component's CSS before handoff. The system enforces its own standards through tooling.
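
A reduced version of that kind of audit can be sketched in a few lines. This is not the audit_css implementation: it covers only raw colors and primitive references, it scans line by line with regular expressions instead of parsing CSS, and the --s2a-primitive- naming, the severity levels, and the value-to-token map are assumptions.

```typescript
type Severity = "error" | "warning";

interface Violation {
  line: number;
  severity: Severity;
  found: string;
  remediation: string;
}

const RAW_COLOR = /#[0-9a-fA-F]{3,8}\b|rgba?\([^)]*\)/g;
const PRIMITIVE_VAR = /var\(--s2a-primitive-[\w-]+\)/g; // hypothetical naming convention

// semanticByValue maps a raw value to the semantic custom property that carries it.
function auditCss(css: string, semanticByValue: Record<string, string>): Violation[] {
  const violations: Violation[] = [];
  css.split("\n").forEach((text, index) => {
    const colon = text.indexOf(":");
    if (colon === -1) return; // skip lines with no colon, such as plain selectors and braces
    const value = text.slice(colon + 1);
    for (const found of value.match(RAW_COLOR) || []) {
      const token = semanticByValue[found.toLowerCase()];
      violations.push({
        line: index + 1,
        severity: "error",
        found,
        remediation: token ? `replace with var(${token})` : "bind this value to a semantic token",
      });
    }
    for (const found of value.match(PRIMITIVE_VAR) || []) {
      violations.push({
        line: index + 1,
        severity: "warning",
        found,
        remediation: "alias through a semantic token instead of a primitive",
      });
    }
  });
  return violations;
}

const sampleStyles = [
  ".button {",
  "  color: #1A1A1A;",
  "  background: var(--s2a-primitive-gray-50);",
  "  border-color: var(--s2a-color-content-default);",
  "}",
].join("\n");

const auditReport = auditCss(sampleStyles, { "#1a1a1a": "--s2a-color-content-default" });
```

For the sample, auditReport holds two entries: an error on line 2 suggesting var(--s2a-color-content-default), and a warning on line 3 for the primitive reference. Line 4 passes because it already uses a semantic token. A line-based scan can misread selectors that contain a colon, so a production audit would more likely work from a parsed stylesheet.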

Results

- 499 tokens across 7 collections, synced from Figma Variables to CSS with no manual handoff step.

- 10+ web components with full semantic token bindings and machine-readable spec.json contracts.

- 17 MCP tools covering token resolution, component schemas, CSS validation, and spec generation.

- Automated governance: any CSS file auditable in under a minute, severity-graded with remediation guidance.

- 6-sheet documentation standard for every component, generated in Figma against live tokens rather than written manually.

- Accessibility and inclusive design embedded across structural specs, design-phase review, and code-review support.

- Token lookup, component API reference, and tech spec generation available to engineers and AI agents directly from their editor, without a human handoff.

What This Means for Mature Design Systems

The challenge for mature design systems is no longer just component coverage or documentation quality. The next challenge is whether the system can be understood and used by both humans and AI agents without losing design intent.

The architectural path is clear.

- Semantic token layer: tokens that express intent, not just value, so an agent understands what color-content-default means.

- Machine-readable component contracts: structured specs for variants, prop types, token bindings, accessibility requirements, and implementation constraints.

- MCP as the interface layer: a queryable API over the live system so agents can access the source of truth without a human translation step.

- Automated governance: tooling that enforces standards at the point of authorship, so generated UI can be checked before it reaches a human reviewer.

The scale difference between a single product surface and an enterprise-wide platform is real. That is why the architecture matters. Semantic tokens, machine-readable contracts, and MCP interfaces are horizontal systems. They do not require proportional headcount as adoption grows. The governance layer scales because the system can enforce its own rules.

Key Design Decisions and Why They Scale

- Semantic tokens, not raw values: a single theme change propagates across the system without touching components; agents understand intent, not just resolved values.

- Plain web components, no framework: works in any rendering environment; no framework lifecycle to coordinate across enterprise adopters.

- Machine-readable specs: the contract between design, engineering, and AI agents. Not documentation, source.

- MCP reads live source: no separate data layer to go stale; the server reflects actual component files and token JSON at query time.

- Governance as tooling, not policy: automated audit enforcement means standards hold at scale without a human reviewer in every loop.

- Documentation generated, not written: doc sheets produced by tooling against live tokens; fast to produce, accurate by construction.

Lessons

The work taught me what I would argue is the central design challenge for systems in an agent-native world: the guardrails you build for humans are the guardrails that make AI output trustworthy. Accessible components, semantic tokens, structured specs, and automated governance were not AI features. They were system quality decisions.

The pattern does not scale if the atom library is incomplete. Every component that lives outside the system is invisible to agents, invisible to governance, and invisible to the documentation layer. That is not a tooling problem. It is an adoption and alignment problem, which is why the advocacy and cross-functional work are as important as the technical architecture.

What's Next

- Component generation: scaffolding a full Figma component set from a spec file.

- Block generation: composing atoms into blocks, grounded in the live library.

- Figma spec validation: diffing a live Figma design against its component spec, catching drift before engineering handoff.

- Org-wide adoption: raising the team's fluency with agent-native workflows through workshops and tooling demonstrations.